Abstract

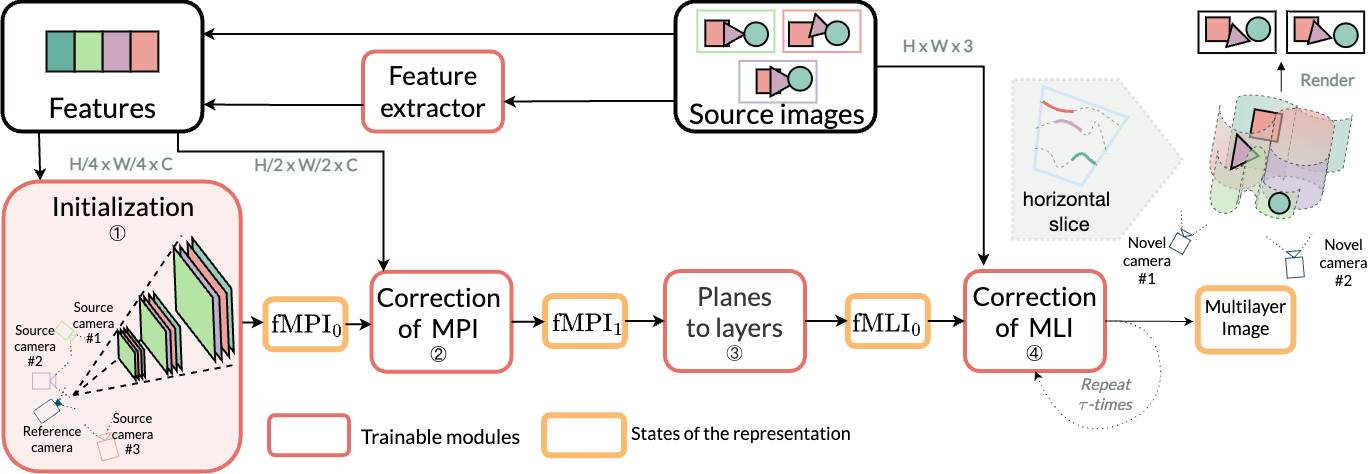

We present a new method for lightweight novel-view synthesis that generalizes to an arbitrary forward-facing scene. Recent approaches are computationally expensive, require per-scene optimization, or produce a memory-expensive representation. We start by representing the scene with a set of fronto-parallel semitransparent planes and afterward convert them to deformable layers in an end-to-end manner.

Additionally, we employ a feed-forward refinement procedure that corrects the estimated representation by aggregating information from input views. Our method does not require fine-tuning when a new scene is processed and can handle an arbitrary number of views without restrictions.

Experimental results show that our approach surpasses recent models in terms of common metrics and human evaluation, with a noticeable advantage in inference speed and in the compactness of the inferred layered geometry.
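To make the starting representation concrete: a set of fronto-parallel semitransparent planes is conventionally rendered by back-to-front "over" compositing. The snippet below is a minimal sketch of that generic step only, assuming the planes are already aligned to the target view. The function name `composite_planes` and the array layout are illustrative assumptions, not the authors' implementation, and the conversion to deformable layers and the refinement procedure are not shown.

```python
import numpy as np


def composite_planes(colors, alphas):
    """Back-to-front 'over' compositing of semitransparent planes.

    colors: (P, H, W, 3) array of per-plane RGB, ordered far to near (assumed layout).
    alphas: (P, H, W, 1) array of per-plane opacities in [0, 1].
    Returns an (H, W, 3) image.
    """
    out = np.zeros(colors.shape[1:], dtype=np.float64)
    for rgb, a in zip(colors, alphas):
        # Each nearer plane partially covers what has been accumulated so far.
        out = rgb * a + out * (1.0 - a)
    return out


# Two 1x1 planes: an opaque red far plane and a half-transparent blue near plane.
colors = np.array([[[[1.0, 0.0, 0.0]]], [[[0.0, 0.0, 1.0]]]])
alphas = np.array([[[[1.0]]], [[[0.5]]]])
image = composite_planes(colors, alphas)
```

In a full pipeline each plane would first be warped into the target camera before compositing; that step is omitted here.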

Related Works

- Stereo Magnification: Learning View Synthesis using Multiplane Images in SIGGRAPH 2018

- Immersive Light Field Video with a Layered Mesh Representation in SIGGRAPH 2020

- Deep Multi Depth Panoramas for View Synthesis in ECCV 2020

BibTeX

@inproceedings{Solovev2022simpli,
  author = {Solovev, Pavel and Khakhulin, Taras and Korzhenkov, Denis},
  title = {Self-improving Multiplane-to-layer Images for Novel View Synthesis},
  booktitle = {The IEEE Winter Conference on Applications of Computer Vision (WACV)},
  month = {January},
  year = {2023},
}